For a number of years I’ve taught a studio course in our public history graduate program on designing interactive exhibits. Most academic historians present their work in monographs and journal articles unless they are way out there on the fringe, in which case they may be experimenting with trade publications, documentary film, graphic novels, photography, websites, blogs, games or even more outré genres. Typically the emphasis remains on creating representations that are intended to be read in some sense, ideally very carefully. Public historians, however, need to be able to communicate to larger and more disparate audiences, in a wider variety of venues, and in settings where they may not have all, or even much, of the attention of their publics. Exhibits that are designed merely to be read closely are liable to be mostly ignored. When that happens, of course, it doesn’t matter how interesting your interpretation is.

Students in the course learn how to embed their interpretations in interactive, ambient and tangible forms that can be recreated in many different settings. To give some idea of the potential, consider the difference between writing with a word processor and stepping on the brake of a moving car. While using a word processor you are focused on the task and aware that you are interacting with a computer. The interface is intricate, sensorimotor involvement is mostly limited to looking and typing, and your surrounding environment recedes into the background of awareness. On the other hand, when braking you are focused on your involvement with the environment. Sensorimotor experiences are immersive, the interface to the car is as simple as possible, and you are not aware that you are interacting with computers (although recent-model cars in fact have dozens of continuously operating and networked microcontrollers).

Academic historians have tended to emphasize opportunities for knowledge dissemination that require our audience to be passive, focused and isolated from one another and from their surroundings. When we engage with a broader public, we need to supplement that model by building some of our research findings into communicative devices that are transparently easy to use, provide ambient feedback, and are closely coupled with the surrounding environment. The skills required to do this come from a number of research fields that ultimately depend on electronics and computers. Thanks to the efforts of community-minded makers, hackers, and researchers, these techniques are relatively easy to learn and apply.

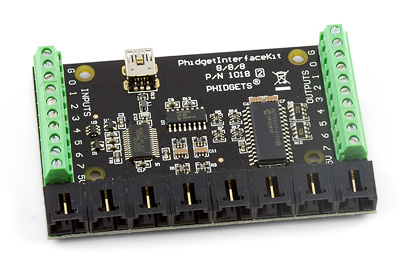

Physical computing. In order to make objects or environments aware of people, to make them responsive and interactive, we need to give them a better sense of what human beings are like and what they’re capable of (Igoe & O’Sullivan 2004; Igoe 2011). Suppose your desktop computer had to guess what you look like based on your use of a word processor. It could assume that you have an eye and an ear, because you respond to things presented on the screen and to beeps, and it could assume you have a finger, because you push keys on the keyboard. To dramatize this, I usually use the image above, which is based on a drawing in Igoe and O’Sullivan (2004). It looks horrible: people are nothing like that. By giving our devices a better sense of what we’re actually like, we make it possible for them to better fit into our ongoing lifeworlds.

Pervasive computing. We are at the point where computational devices are becoming ubiquitous, invisible, part of the surroundings (McCullough 2004). The design theorist Adam Greenfield refers to this condition as “everyware” (2006). A number of technologies work together to make this possible. Embedded microprocessors put the power of full computers into tiny packages. Micro-electro-mechanical systems (MEMS) include sensors and actuators to sense and control the environment. Radio transceivers allow these miniature devices to communicate with one another and get online. Passive radio frequency ID circuits (RFIDs) are powered by radio waves to transmit identifying information. All of these systems are mass-produced so that unit costs are very low, and it becomes possible to imagine practically everything being manufactured with its own unique identifier and web address. This scenario is sometimes called the “internet of things.” Someday, instead of searching for your keys, you may be able to Google for them. As Bruce Sterling notes, practically everything in the world could become the “protagonist of a documented process” (2005). Until now, provenance has had to be reconstructed painstakingly, and only for a tiny handful of objects. Most historians are not ready to conduct research in a world where every object can tell us about its own history of manufacture, ownership, use, repair, and so on. Dealing with pervasive computation will require the ability to quickly focus on essential information, to relegate non-essential information to peripheral awareness, and to access information in the places and settings where it can make a difference.

Interaction design. The insinuation of computation and interactivity into every conceivable setting has forced designers to abandon the traditional idea of “human-computer interaction,” and to take a much more expansive perspective instead (Moggridge 2006; Saffer 2006). Not only is everything becoming a potential interface, but many smart devices are better conceptualized as mediating between people, rather than between person and machine. Services like ordering a cup of coffee at Starbucks are now designed using the same techniques as those used to create interactive software (e.g., Google Calendar) and hardware (e.g., the iPod). In order to benefit from the lessons of interaction design, historians will have to take into account the wide range of new settings where we can design experiences and shape historical consciousness. The technology of tangible computing provides a link between pervasive devices, social interaction, and the material environment (Dourish 2004).

Desktop fabrication. Most radical of all, everything that is in digital form can be materialized, via machines that add or subtract matter. The former include a range of 3D printing technologies that deposit tiny amounts of glue, plastic or other materials, or that use lasers to selectively fuse small particles of metal, ceramic or plastic. The latter include computer-controlled milling machines, lathes, drills, grinders, laser cutters and other tools. The cost of these devices has been dropping rapidly, while their ease of use has been increasing. The physicist Neil Gershenfeld has assembled a number of “fab labs” (universal fabrication laboratories) from collections of these devices. At present, a complete fab lab costs around $30,000 to $40,000, and a few key machines are considerably cheaper (Gershenfeld 2000, 2007). Enthusiasts talk about the possibility of downloading open source plans and “printing out” a bicycle, an electric guitar, anything really. An open source hardware community is blossoming, aided in part by O’Reilly Media’s popular MAKE magazine and by websites like Instructables and Thingiverse. Desktop fabrication makes it possible to build and share custom interactive devices that communicate our knowledge in novel, material forms.

References

- Dourish, Paul. Where the Action Is: The Foundations of Embodied Interaction. Cambridge, MA: MIT, 2004.

- Gershenfeld, Neil. When Things Start to Think. New York: Holt, 2000.

- Gershenfeld, Neil. Fab: The Coming Revolution on Your Desktop—From Personal Computers to Personal Fabrication. New York: Basic, 2007.

- Greenfield, Adam. Everyware: The Dawning Age of Ubiquitous Computing. Berkeley, CA: New Riders, 2006.

- Igoe, Tom. Making Things Talk, 2nd ed. Sebastopol, CA: O’Reilly, 2011.

- Igoe, Tom and Dan O’Sullivan. Physical Computing: Sensing and Controlling the Physical World with Computers. Thomson Course Technology, 2004.

- McCullough, Malcolm. Digital Ground: Architecture, Pervasive Computing and Environmental Knowing. Cambridge, MA: MIT, 2004.

- Moggridge, Bill. Designing Interactions. Cambridge, MA: MIT, 2006.

- Norretranders, Tor. The User Illusion: Cutting Consciousness Down to Size. New York: Penguin, 1999.

- Saffer, Dan. Designing for Interaction: Creating Smart Applications and Clever Devices. Berkeley, CA: New Riders, 2006.

- Sterling, Bruce. Shaping Things. Cambridge, MA: MIT, 2005.

- Torrone, Phillip. “Open Source Hardware, What Is It? Here’s a Start…” MAKE: Blog (23 Apr 2007).
