If you are just getting started with online research, there are some things that are handy to know, and a few tools you might like to set up for yourself.

Analog and digital. When I talk to my students about the difference between analog and digital representations, I use the example of two clocks. The first is the kind that has hour and minute hands, and perhaps one for seconds, too. At some point you learned how to tell time on an analog clock, and it may have seemed difficult. Since the clock takes on every value in between two times, telling time involves a process of measurement. You say, “it’s about 3:15,” but the time changes continuously as you do so. Telling time with a digital clock, by contrast, doesn’t require you to do more than read the value on the display. It is 3:15 until the clock says it is 3:16. Digital representations can only take on one of a limited (although perhaps very large) number of states. Not every digital representation is electronic. Writing is digital, too, in the sense that there are only a finite number of characters, and instances of each are usually interchangeable. You can print a book in a larger font, or in Braille, without changing the meaning. Salvador Dalí’s melting clocks, however, would keep different time–which was the point, of course.

The costs are different. Electronic digital information can be duplicated at near-zero cost, transmitted at the speed of light, stored in infinitesimally small volumes, and created, processed and consumed by machines. This means that ideas that were more-or-less serviceable in the world before networked computers–ideas about value, property rights, communication, creativity, intelligence, governance and many other aspects of society and culture–are now up for debate. The emergence of new rights regimes (such as open access, open content and open source) and the explosion of new information are manifestations of these changing costs.

You won’t be able to read everything. Estimates of the amount of new information that is now created annually are staggering (2003, 2009). As you become more skilled at finding online sources, you will discover that new material on your topic appears online much faster than you can read it. The longer you work on something, the more behind you will get. This is OK, because everyone faces this issue whether they realize it or not. In traditional scholarship, scarcity was the problem: travel to archives was expensive, access to elite libraries was gated, resources were difficult to find, and so on. In digital scholarship, abundance is the problem. What is worth your attention or your trust?

Assume that what you want is out there, and that you simply need to locate it. I first found this advice in Thomas Mann’s excellent Oxford Guide to Library Research. Although Mann’s book focuses primarily on pre-digital scholarship, his strategies for finding sources are more relevant than ever. Don’t assume that you are the best person for the job, either. Ask a librarian for help. You’ll find that they tend to be nicer, better informed, more helpful and more tech savvy than the people you usually talk to about your work. Librarians work constantly on your behalf to solve problems related to finding and accessing information.

The first online tool you should master is the search engine. The vast majority of people think that they can type a word or two into Google and choose something from the first page of millions of results. If they don’t see what they’re looking for, they try a different keyword or give up. When I talk to scholars who aren’t familiar with digital research, their first assumption is often that there aren’t any good online resources for their subject. A little bit of guided digging often shows them that this is far from the truth. So how do you use search engines more effectively? First of all, most search sites have an advanced search page that lets you focus in on your topic, exclude search terms, weight some terms more than others, limit your results to particular kinds of document, to particular sites, to date ranges, and so on. Second, different search engines introduce different kinds of bias by ranking results differently, so you get a better view when you routinely use more than one. Third, all of the major search engines keep introducing new features, so you have to keep learning new skills. Research technique is something you practice, not something you have.
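Many of the refinements on an advanced search page can also be typed straight into the search box. The sketch below is only an illustration: `build_query` is a made-up helper, and it assumes the operators that Google documents (quotation marks for an exact phrase, a leading minus sign to exclude a term, and `site:` to limit results to one site). Other search engines may use different syntax.

```python
from urllib.parse import urlencode


def build_query(phrase, include=(), exclude=(), site=None):
    """Combine an exact phrase with extra, excluded and site-limited terms."""
    parts = [f'"{phrase}"']                       # quotes ask for the exact phrase
    parts.extend(include)                         # ordinary extra keywords
    parts.extend(f"-{term}" for term in exclude)  # leading minus excludes a term
    if site:
        parts.append(f"site:{site}")              # restrict results to one site
    return " ".join(parts)


# Looking for a missionary who shares a name with an animator.
query = build_query("Adam Elliot", include=["missionary"], exclude=["animator"])
print(query)

# The same query packaged as a search URL that could be bookmarked.
print("https://www.google.com/search?" + urlencode({"q": query}))
```

Saving a query like this as a bookmark is one way to rerun the same refined search every few weeks without retyping it.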

Links are the currency of the web. Links make it possible to navigate from one context to another with a single click. For human users, this greatly lowers the transaction costs of comparing sources. The link is abstract enough to serve as a means of navigation and to subsume traditional scholarly activities like footnoting, citation, glossing and so on. Furthermore, extensive hyperlinking allows readers to follow nonlinear and branching paths through texts. What many people don’t realize is that links are constantly being navigated by a host of artificial users, colorfully known as spiders, bots or crawlers. A computer program downloads a webpage, extracts all of the links and other content on it, and follows each new link in turn, downloading the pages that it encounters along the way. This is where search engine results come from: the ceaseless activity of millions of automated computer programs that constantly remake a dynamic and incomplete map of the web. It has to be this way, because there is no central authority. Anyone can add stuff to the web or remove it without consulting anyone else.
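The download, extract and follow loop of a crawler can be sketched in a few lines. This is a toy, not how any real search engine is built: the four "pages" live in a made-up dictionary called `PAGES` so that the example runs without touching the network, and the names `LinkExtractor` and `crawl` are illustrative. A real spider would fetch pages over HTTP, respect `robots.txt`, and pause between requests.

```python
from collections import deque
from html.parser import HTMLParser

# Hypothetical miniature "web": page address -> HTML source.
PAGES = {
    "a.html": '<a href="b.html">B</a> <a href="c.html">C</a>',
    "b.html": '<a href="a.html">A</a> <a href="d.html">D</a>',
    "c.html": "<p>No links here.</p>",
    "d.html": '<a href="missing.html">gone</a>',
}


class LinkExtractor(HTMLParser):
    """Collect the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(start, fetch):
    """Visit pages breadth-first from start; return {page: [links found]}."""
    seen = {start}
    queue = deque([start])
    link_map = {}
    while queue:
        url = queue.popleft()
        html = fetch(url)
        if html is None:          # dead link: nothing to download
            continue
        parser = LinkExtractor()
        parser.feed(html)
        link_map[url] = parser.links
        for link in parser.links:
            if link not in seen:  # follow each new link only once
                seen.add(link)
                queue.append(link)
    return link_map


# PAGES.get stands in for a real download function.
link_map = crawl("a.html", PAGES.get)
for page, links in link_map.items():
    print(page, "->", links)
```

The resulting `link_map` is a small version of the "dynamic and incomplete map" described above: it records only what could be reached from the starting page, and the dead link to `missing.html` simply drops out.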

The web is not structured like a ball of spaghetti. Research done with web spiders has shown that a lot of the most interesting information to be gleaned from digital sources lies in the hyperlinks leading into and out of various nodes, whether personal pages, documents, archives, institutions, or what have you. Search engines provide some rudimentary tools for mapping these connections, but much more can be learned with more specialized tools. Some of the most interesting structure is to be found in social networks, because…

Emphasis is shifting from a web of pages to a web of people. Sites like Blogger, WordPress, Twitter, Facebook, Flickr and YouTube put the emphasis on the contributions of individual people and their relationships to one another. Social searching and social recommendation tools allow you to find out what your friends or colleagues are reading, writing, thinking about, watching, or listening to. By sharing information that other people find useful, individuals develop reputation and change their own position in social networks. Some people are bridges between different communities, some are hubs in particular fields, and many are lonely little singletons with one friend. This is very different from the broadcast world, where there were a few huge hubs spewing to a thronging multitude (known as “the audience”).
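To make the vocabulary of hubs, bridges and singletons concrete, here is a small sketch over an invented friendship network. Everything in it is an assumption made for illustration: the names, the edges, and the definitions themselves. A "hub" is taken to be whoever has the most connections, a "singleton" is anyone with exactly one friend, and a "bridge" is a person whose removal would split the network (what graph theorists call a cut vertex or articulation point). Dedicated network analysis tools offer many more refined measures.

```python
from collections import defaultdict

# Hypothetical friendships, each a pair of names.
EDGES = [
    ("Ana", "Ben"), ("Ana", "Cy"), ("Ana", "Dee"), ("Ana", "Ivy"),
    ("Ben", "Cy"),
    ("Dee", "Eve"),                    # the only tie between the two groups
    ("Eve", "Fay"), ("Eve", "Gus"), ("Fay", "Gus"),
    ("Gus", "Hal"),
]


def build_graph(edges):
    """Turn a list of pairs into {person: set of friends}."""
    graph = defaultdict(set)
    for a, b in edges:
        graph[a].add(b)
        graph[b].add(a)
    return graph


def reachable(graph, start, skip=None):
    """Everyone reachable from start without passing through skip."""
    seen, stack = {start}, [start]
    while stack:
        for friend in graph[stack.pop()]:
            if friend != skip and friend not in seen:
                seen.add(friend)
                stack.append(friend)
    return seen


def is_bridge(graph, person):
    """True if removing person would cut the others off from each other."""
    others = [p for p in graph if p != person]
    return len(reachable(graph, others[0], skip=person)) < len(others)


graph = build_graph(EDGES)
degree = {person: len(friends) for person, friends in graph.items()}
most = max(degree.values())

hubs = sorted(p for p, d in degree.items() if d == most)
singletons = sorted(p for p, d in degree.items() if d == 1)
bridges = sorted(p for p in graph if is_bridge(graph, p))

print("hubs:", hubs)
print("singletons:", singletons)
print("bridges:", bridges)
```

Note that Dee has only two friends and still counts as a bridge: position in the network matters as much as the number of connections.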

Ready to jump in? Here are some things you might try.

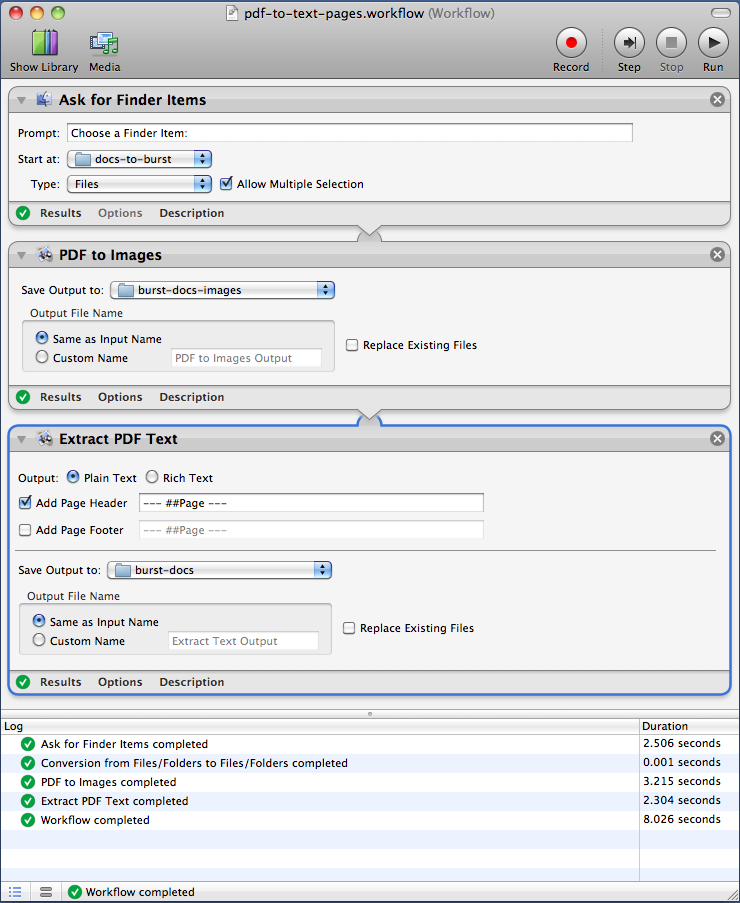

Customize a browser to make it more useful for research

- Install Firefox

- Add some search extensions

    - WorldCat

    - Internet Archive

    - Project Gutenberg

    - Merriam-Webster

    - Pull down the search icon and choose “Manage Search Engines” -> “Get more search engines” then search for add-ons within Search Tools

- Try search refinement

    - Install the Deeper Web add-on and try using tag clouds to refine your search

    - Example. Suppose you are trying to find out more about a nineteenth-century missionary named Adam Elliot. If you try a basic Google search, you will soon discover there is an Australian animator with the same name. Try using Deeper Web to find pages on the missionary.

- Add bookmarks for advanced search pages to the bookmark toolbar

    - Google Books (note that you can set to “full view only” or “full view and preview”)

    - Historic News (experiment with the timeline view)

    - Internet Archive

    - Hathi Trust

    - Flickr Commons (try limiting by license)

    - Wolfram Alpha (try “China population”)

    - Google Ngram Viewer

- You can block sites that return low-quality results

Work with citations

- Install Zotero

- Try grabbing a record from the Amazon database

- Use Zotero to make a note of stuff you find using Search Inside on your book

- Under the info panel in Zotero, use the Locate button to find a copy in a library near you

- From the WorldCat page, try looking for related works and saving a whole folder of records in Zotero

- Find the book in a library and save the catalog information as a page snapshot

- Go into Google Books, search for full view only, download metadata and attach PDF

- Learn more about Zotero

- Explore other options for bibliographic work (e.g. Mendeley)

Find repositories of digital sources on your topic

Here are a few examples for various kinds of historical research.

- Canada

    - Canadiana.org

    - Dictionary of Canadian Biography online

    - Repertory of Primary Source Databases

    - Our Roots

    - British Columbia Digital Library

    - Centre for Contemporary Canadian Art

- US

    - Library of Congress Digital Collections

    - American Memory

    - NARA

    - Calisphere

    - NYPL Digital Projects

- UK and Europe

    - Perseus Digital Library

    - UK National Archives

    - Old Bailey Online

    - London Lives

    - Connected Histories

    - British History Online

    - British Newspapers 1800-1900

    - English Broadside Ballad Archive

    - EuroDocs

    - Europeana

    - The European Library

    - Gallica

- Thematic (e.g., history of science and medicine)

    - Complete Works of Charles Darwin Online

    - Darwin Correspondence Project

    - National Library of Medicine Digital Projects

Capture some RSS feeds

- Install the Sage RSS feed reader in Firefox (lots of other possibilities, like Google Reader)

- Go to H-Net and choose one or more lists to monitor; drag the RSS icons to the Sage panel and edit properties

- Go to Google News and create a search that you’d like to monitor; drag RSS to Sage panel and edit properties

- Go to Hot New Releases in Expedition and Discovery at Amazon and subscribe to RSS feed

- Consider signing up for Twitter, following a lot of colleagues and aggregating their interests with Tweeted Times (this is how Digital Humanities Now works)
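If you are curious about what a feed reader is actually reading, an RSS feed is just a small XML file listing recent items. The sketch below parses one with Python's standard library. The feed text is invented and inlined so the example runs offline, and `read_feed` is an illustrative helper name; it also assumes the plain RSS 2.0 layout of a `channel` containing `item` elements, so Atom feeds would need different tag names.

```python
import xml.etree.ElementTree as ET

# Hypothetical RSS 2.0 feed, inlined so the example needs no network access.
SAMPLE_FEED = """<?xml version="1.0"?>
<rss version="2.0">
  <channel>
    <title>Example History List</title>
    <link>https://example.org/</link>
    <description>Made-up announcements for illustration</description>
    <item>
      <title>Call for papers: digital archives</title>
      <link>https://example.org/cfp</link>
    </item>
    <item>
      <title>New digitized newspaper collection</title>
      <link>https://example.org/newspapers</link>
    </item>
  </channel>
</rss>"""


def read_feed(xml_text):
    """Return the channel title and a list of (title, link) pairs."""
    channel = ET.fromstring(xml_text).find("channel")
    items = [(item.findtext("title"), item.findtext("link"))
             for item in channel.findall("item")]
    return channel.findtext("title"), items


feed_title, items = read_feed(SAMPLE_FEED)
print(feed_title)
for title, link in items:
    print(" -", title, "<" + link + ">")
```

A feed reader does essentially this on a schedule for every feed you subscribe to, then shows you only the items it has not seen before.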

Discover some new tools

- The best place to start is Lisa Spiro’s Getting Started in the Digital Humanities and Digital Research Tools wiki
